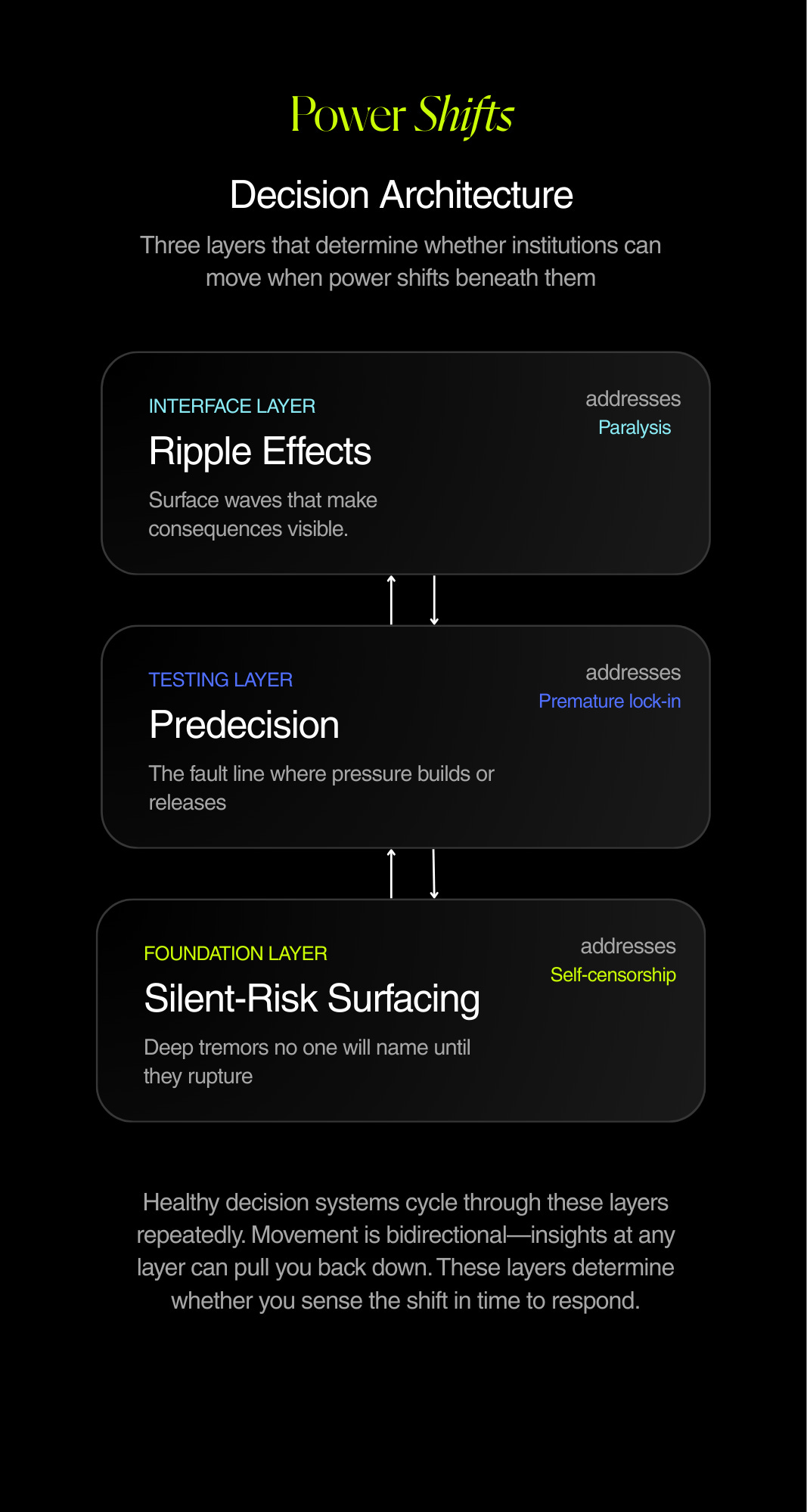

Decision architecture

A visual explainer of the three layers that determine whether you sense the shift in time to respond

Before we get into this week’s post, a quick note of thanks to those of you who have subscribed to the Masters in Public Affairs podcast I launched last week, and for the unsolicited kind words you’ve provided. I’m excited about this project and will be back next week with the next instalment in the series. In the meantime, if you haven’t already subscribed, you can do so here:

I’ve been staring at my last three essays and feeling like I owe you an explanation.

One essay argues that teams freeze because they can’t see consequences. Another argues they commit too early because they stop pressure-testing. A third argues they miss risks entirely because no one feels safe naming them.

Read in sequence, you could reasonably ask: so which is it? Are we moving too slow, too fast, or not at all?

The honest answer: all three. Sometimes in the same organization. Sometimes in the same meeting.

I spent part of this week trying to reconcile this in my own head. Drawing on paper, rearranging boxes, feeling mildly annoyed that the ideas I'd been so confident about individually didn't snap together as cleanly as I wanted.

Slowly, I began to realize that these aren’t competing problems. They’re layers of the same architecture—and the reason they feel contradictory is that most of us think about decisions as a sequence. First you gather information, then you analyze, then you decide, then you act. Linear. Tidy. Wrong.

Real decisions move through layers. And healthy institutions cycle through those layers repeatedly, in both directions.

So I built a visual to clarify this for my own brain, and hopefully for yours too:

At the bottom: Silent Risk Surfacing

This is the work of expanding what's sayable. I’ve seen exceptional analysts imagine extreme scenarios all the time—and then not put them forward for the group’s consideration. Naming something outside the consensus window feels professionally reckless. So people protect their careers, organizations get blindsided, and everyone pretends the scenario was unforeseeable. It rarely was.

In the middle: The Predecision

The stutter step before a recommendation hardens. I've watched teams lock in a frame before the environment finished forming, then spend months defending a position that stopped making sense weeks earlier. The sunk cost isn't money. It's ego. The predecision is the discipline of keeping interpretation elastic long enough to actually test it.

At the surface: Ripple Effects

This is where consequences become visible before outcomes are known. Teams freeze when they can’t see what follows their choices. When you can make downstream effects explicit, the conversation shifts from “are we right?” to “what exposure are we willing to carry?”

The arrows in the visual run in both directions because that’s how this actually works. An insight about consequences sends you back to question the frame. A surfaced risk reopens a decision everyone thought was settled. You don’t move through these layers once; you cycle through them until you’re ready to commit.

Where to go deeper

Each layer has its own essay:

The scenarios no one will put in a deck explores why analysts self-censor and what AI changes about permission.

The Predecision examines how teams lock in too early and offers a technique for keeping frames elastic.

The missing layer in institutional decision-making diagnoses why smart teams freeze and what it takes to create visibility into consequences.

Together, they’re the first three entries in what I’m building toward: a capabilities index for institutions that need to make better decisions under conditions that don’t wait for certainty.

More to come.